In modern manufacturing, traditional industrial robots are trapped by their own rigidity. Every time a task changes, whether switching the color of the components being sorted or adjusting a sorting bin, a specialist must manually rewrite the code and repeat extensive safety tests. This “reprogramming gap” creates a major bottleneck, causing costly downtime and preventing facilities from scaling to high-mix, low-volume production.

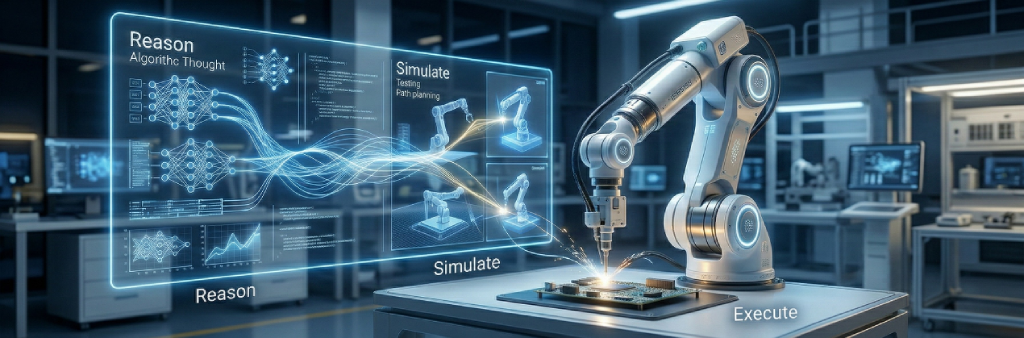

The Brain – Reasoning Layer

The solution begins by replacing fixed scripts with cognitive intent. An operator issues a simple natural-language command such as “Sort the red components and inspect for defects.” The request is processed by the Lanner ECA-6051 MGX™ Server, which uses NVIDIA L40S GPUs to power the Lanner Lexa Digital Human, the system’s human-machine interface (HMI).

Using large language models (LLMs), the system interprets the operator’s instruction and decomposes it into a structured, machine-executable task plan. This makes the server the “brain” of the operation, handling open-ended, language-driven logic that traditional fixed-program controllers cannot process.
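The brief does not specify the task-plan format. As a minimal sketch, assuming the LLM is prompted to return JSON containing a list of steps drawn from a fixed action vocabulary, the server could validate that plan before it is passed downstream. The schema, the action names (`detect`, `pick`, `inspect`, `place`), and `parse_task_plan` are all hypothetical and are not part of any Lanner or NVIDIA API.

```python
import json
from dataclasses import dataclass, field

# Hypothetical action vocabulary the downstream robot stack is assumed to support.
ALLOWED_ACTIONS = {"detect", "pick", "inspect", "place"}


@dataclass
class Step:
    action: str
    target: str
    params: dict = field(default_factory=dict)


def parse_task_plan(llm_output: str) -> list[Step]:
    """Parse a JSON plan (format assumed, not specified by the source) and
    reject any step whose action is outside the allowed vocabulary."""
    data = json.loads(llm_output)
    steps = []
    for i, raw in enumerate(data["steps"]):
        action = raw.get("action")
        if action not in ALLOWED_ACTIONS:
            raise ValueError(f"step {i}: unsupported action {action!r}")
        steps.append(Step(action=action, target=raw["target"],
                          params=raw.get("params", {})))
    return steps


# Illustrative plan an LLM might return for
# "Sort the red components and inspect for defects."
example = """{"steps": [
  {"action": "detect",  "target": "component", "params": {"color": "red"}},
  {"action": "pick",    "target": "component"},
  {"action": "inspect", "target": "component", "params": {"check": "defects"}},
  {"action": "place",   "target": "bin_red"}
]}"""

plan = parse_task_plan(example)
for step in plan:
    print(step.action, step.target, step.params)
```

Constraining the model to a closed set of actions keeps a free-form language request from turning into an instruction the robot has no safe implementation for.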

The Digital Twin – Validation Loop

Before a robotic arm moves an inch in the real world, the generated plan is exported to a digital twin environment. The Lanner EAI-I730 Edge AI Workstation, equipped with an NVIDIA RTX Pro 6000 SE GPU, runs NVIDIA Isaac Sim to simulate every motion, object interaction, and environmental constraint. This closed-loop testing verifies that the robot’s path is optimized and collision-free before deployment. By validating in a high-fidelity virtual world first, enterprises eliminate the physical “test-and-check” cycles that often lead to hardware damage or operational risk.
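Isaac Sim performs full physics and sensor simulation, and the toy below does not use its API. It only illustrates the gating idea: a plan is released to the physical robot only if it passes a virtual check. It assumes the plan has already been converted into Cartesian waypoints and that fixtures in the cell can be approximated as axis-aligned boxes. `Box`, `path_is_collision_free`, and `release_to_robot` are hypothetical names.

```python
from dataclasses import dataclass

Point = tuple[float, float, float]


@dataclass
class Box:
    """Axis-aligned obstacle; a crude stand-in for geometry a simulator would provide."""
    lo: Point
    hi: Point

    def contains(self, p: Point) -> bool:
        return all(l <= c <= h for l, c, h in zip(self.lo, p, self.hi))


def path_is_collision_free(waypoints: list[Point], obstacles: list[Box],
                           samples: int = 50) -> bool:
    """Sample points along each straight segment and fail if any lies inside an obstacle."""
    for a, b in zip(waypoints, waypoints[1:]):
        for k in range(samples + 1):
            t = k / samples
            p = tuple(ai + t * (bi - ai) for ai, bi in zip(a, b))
            if any(box.contains(p) for box in obstacles):
                return False
    return True


def release_to_robot(waypoints: list[Point], obstacles: list[Box]) -> str:
    # Hypothetical gate: only plans that pass the virtual check reach the real arm.
    return "deploy" if path_is_collision_free(waypoints, obstacles) else "replan"


fixture = [Box(lo=(0.4, -0.1, 0.0), hi=(0.6, 0.1, 0.3))]
direct = [(0.0, 0.0, 0.1), (1.0, 0.0, 0.1)]
over_the_top = [(0.0, 0.0, 0.1), (0.0, 0.0, 0.5), (1.0, 0.0, 0.5), (1.0, 0.0, 0.1)]
print(release_to_robot(direct, fixture))        # replan
print(release_to_robot(over_the_top, fixture))  # deploy
```

Point sampling can miss obstacles thinner than the sampling interval; a real simulator uses proper collision geometry and also models the arm's links, not just the end-effector path.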

The Body – Execution with Real-Time Perception and Action

Once validated, the mission is pushed to the Lanner EAI-I351 Robotic AI Computer. Built on the NVIDIA Jetson Thor platform, the system uses Vision-Language-Action (VLA) models to “see” and “act” simultaneously. With Blackwell-generation compute at the rugged edge, the robot identifies objects, understands spatial relationships, and executes precise movements with millisecond-level latency. The result is a system that doesn’t just repeat a motion; it adapts to its environment in real time, delivering a 90% reduction in setup time and a seamless handoff from data center reasoning to factory-floor execution.
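The brief does not describe how the VLA model is invoked on the Jetson Thor. The sketch below only illustrates why a closed perception-action loop adapts where a replayed motion would not: the part drifts along a conveyor, and the controller re-observes it on every cycle. `perceive` is a stub camera, and `vla_policy` is a hypothetical stand-in for a real VLA model. In this sketch it simply takes a speed-limited step toward the observed part.

```python
import math


def perceive(t: int) -> tuple[float, float]:
    """Stub camera (hypothetical): the part drifts 1 cm per cycle along x on a conveyor."""
    return (0.50 + 0.01 * t, 0.20)


def vla_policy(observation, gripper, max_step=0.05):
    """Hypothetical stand-in for a VLA model: map the current observation to a motion command."""
    dx, dy = observation[0] - gripper[0], observation[1] - gripper[1]
    dist = math.hypot(dx, dy)
    if dist <= max_step:
        return (dx, dy)             # within reach this cycle: move onto the part
    scale = max_step / dist         # otherwise move toward it at capped speed
    return (dx * scale, dy * scale)


def run_closed_loop(max_ticks=200, tolerance=0.02):
    gripper = (0.0, 0.0)
    for t in range(max_ticks):
        target = perceive(t)        # re-observe every cycle instead of replaying a fixed path
        if math.dist(target, gripper) < tolerance:
            return t, gripper       # close enough to grasp
        vx, vy = vla_policy(target, gripper)
        gripper = (gripper[0] + vx, gripper[1] + vy)
    return None, gripper


tick, gripper = run_closed_loop()
print(tick, gripper)
```

A trajectory planned once toward the part's initial position would arrive where the part used to be. Because the loop re-perceives each cycle, it keeps correcting until it reaches the moving part.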

Conclusion

Gen-AI Powered Robotic AI brings together LLM-driven reasoning, real-time vision-based execution, and digital twin validation into a unified, closed-loop system that transforms how robots operate in dynamic environments. By enabling machines to understand human intent, simulate outcomes before movement, and execute tasks with precision at the edge, this architecture eliminates rigid programming constraints and reduces deployment risk.